|

Introduction

Many things could change when tracking an unknown object, including shape, appearance,

scale, background as well as camera motion. Because of these variations, and

because of the inherent inaccuracies in each tracking step, errors accumulate

and drifting occurs. This makes it very challenging to track something

for a long period of time.

We re-cast tracking as a figure/ground segmentation problem, separating

foreground object from background in each frame. Temporal coherence cues

roughly locate the spatial support of the object; static image cues refine the

support, correct errors and compute an accurate object mask.

Temporal coherence cues

Every tracking paradigm maintains two types of knowledge about the object being tracked: what it is, being an image patch or a histogram of color and texture or its shape/contour; and where it is, estimated either at low-level (e.g. optical flow) or high-level (e.g. dynamics models).

For the what question, we take a simple approach, and maintain two

histograms of color/brightness values, one for the figure and one for the

background.

For the where question, many existing approaches track object center

only, by assuming that the object has a rectangular (or ellptical) isupport.

We go further and ask for a segmentation of figure vs background.

The where question becomes the following: given a figure mask in the

previous frame, use image information to estimate a figure mask in the current

frame. To do this, we compute CDT superpixels for

both frames and compute a correspondence/assignment between the two sets of

superpixels/regions, based on color and location. This

correspondence/assignment distributes region mass in the previous frame to the

current frame, hence carrying the figure mask in the previous frame into an

estimate of figureness for the current frame.

Combining static image cues

The temporal coherence cues give us a rough estimate of where the figure is in

the current frame. It is, however, never accurate, and errors are introduced,

resulting in blurry figure support.

We use static image cues in the current frame to correct these errors. Static

image grouping cues are summarized in a boundary map produced by the Pb

operator, combining brightness, color and texture contrasts. We combine

temporal coherence cues and static image cues in a conditional random field model to produce a final

figure mask.

Accurate figure and background masks make the template update problme easy,

with interference from clutter reduced to a minimum. We update figure/ground

histograms and scale paramters linearly.

Segmentation Makes Tracking Robust

As we will show in our demo results, segmentation can help deal with many

challenges in tracking, such as variations in appearance, pose, scale or

background scene.

|

|

| Frame 1

| Frame 2

|

In this toy example, suppose we want to track a black object over a white

background. Segmentation is trivial in such a case, and we can "track" this

object whatever shape, scale or shade it changes into in Frame 2.

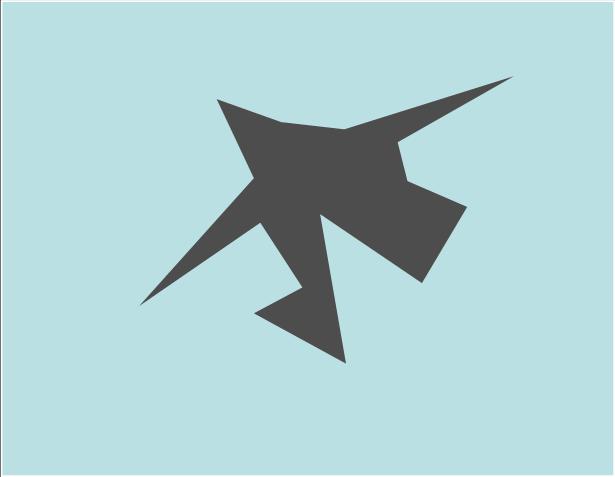

In this real-world example, we can track and (roughly) segment out the player

despite the crowded scene and partial occlusion. Segmentation in this case

works by combining multiple sources of cues: for region 1, appearance cue tells

us that it's not part of the figure; for region 2, spatial coherence cue tells

us that it is too far away; for region 3, it is close to the player and has the

"right" color, but there exists a strong contrast boundary near the player's

leg; for region 4, both boundary contrast and appearance cues are effective.

Sample Results

Video

Full tracks are available, encoded in xvid/divx4 codec.

|